How to get user feedback: A guide to user testing

As the founders and entrepreneurs in our community already know, getting user feedback is a make-or-break move when it comes to launching products. But many of us might not be familiar with user testing methods or know exactly what and how we should be testing in the first place. This guide will give an overview of how user testing works, what kind of user feedback we should be collecting, and how to do it. Let’s get started with the basics.

User testing is an umbrella term for observing how real people use and respond to a prototype of a product or service. Different methods of collecting user feedback can fit into every stage of the product design process. Usability testing is just one type of user testing specifically geared toward assessing the functional ease and effectiveness of a user experience.

The purpose of user testing tends to be focused on one or more of three key objectives, familiar to any startup owner who is looking to validate their thinking:

- Desirability: Will people like it? Do people resonate with our idea? Does it speak to their values in a meaningful way?

- Viability: Will it be successful? Does our idea address an unmet need or improve upon an existing solution in the market?

- Feasibility: Can we build it? Do we have the technological and operational capabilities to deliver on user expectations of our product or service?

Testing product ideas directly with users, whether it’s in the blue-sky exploration stage or getting ready to launch, can reveal opportunities and lead to insights that you might otherwise overlook when you’re in the weeds of your design process.

When should I get user feedback on my design?

You should get feedback early and often. The product design and development process is built for collaboration, and users are the most important members of your design team. Collecting user feedback can happen with prototypes at every stage because anything can be a prototype: paper wireframes, flat screens, even role play, aka “bodystorming.”

Rapid prototyping tools like Uizard make it easy to create interactive flows of your key screens and functionality. What’s most important to consider is the level of fidelity, which means how much detail, like copy, imagery, or color, is included in your design. Let’s take a closer look at how user testing fits in the beginning, middle, and end phases of design.

Concept validation

Low-fidelity prototypes, like basic sketches, wireframes (paper or digital), and storyboards will give you a read on whether you’re heading in the right direction before you’ve committed too many resources to pursuing a single path. At this stage, you’re likely to be exploring a few big ideas, and the feedback you receive will help you assess and prioritize which ones have the most potential in the eyes of your users.

This is also an ideal moment in the design process to invite users into co-creation: giving them the opportunity to work alongside you, contribute their own ideas, and mock up their own low-fidelity prototypes. Similarly, activities like card-sorting are useful for working out your information architecture and product taxonomy. User testing at this early stage helps to ground your design decisions in real customer insights that set you up for success in the long run.

Design iteration

As you flesh out your thinking into more developed user flows, user testing with medium-fidelity prototypes is instrumental in moving your designs forward through iterations. At this stage, prototypes are likely to consist of flat screens linked by select interactive “hot spots” on the page that mimic a user’s path through a website or app.

You’ll want to keep a tight focus on testing your key features and functionalities; your main objective is to spot gaps in the experience. Medium-fidelity prototypes should include just enough detail to support the user in accomplishing the task at hand, whether it’s buying movie tickets, checking account balances, or comparing designer handbag prices. That detail can come from content and messaging, icons and buttons, and navigation schemes. Visual design elements that look too highly polished can distract users from providing the functional feedback that will help you develop your next iteration.

Usability testing

As we noted earlier, the goal of usability testing is to observe how effectively a user is able to move through the end-to-end experience of using your website or app. To collect the most comprehensive feedback at this stage, you’ll need to be close to having a fully realized high-fidelity prototype. The experience should feel as close to the real thing as possible, if not indistinguishable from it.

You’ll want to address a few key questions during usability testing. First, find out what brought a user to your site or app and what they were hoping to accomplish. Then, zoom in on what motivates them to take an action and what inhibits them from completing it. Finally, get a sense of their overall satisfaction: did they find the experience pleasant?

What are some user testing methods?

There are a few basic frames for coming up with user testing methods, and they’re not mutually exclusive. In fact, with every user test you’ve planned into your design process, it’s a good idea to mix and match among a few methods so you can capture a rich array of user feedback.

- Exploratory user testing methods are open-ended and can be participatory, like the co-creation and “bodystorming” we mentioned earlier. The goal is to solicit broad-ranging qualitative feedback that might reveal unexpected or unusual insights.

- Comparative user testing methods are generally oriented toward a quantitative evaluation of two (or more) interfaces, measuring efficacy around task completion, time spent on task, and error rates. However, qualitative comparative tests can be used during concept validation to evaluate the pros and cons of different directions.

- Assessment user testing methods, like usability testing, are focused on a holistic understanding of user satisfaction and functional ease of use.

What sets these methods apart from other forms of capturing user feedback, like A/B testing, surveys, or software user acceptance testing, is that they help to answer why a user made a certain choice or reacted in a certain way. This isn’t to diminish the value of conducting other types of user tests; it’s just to point out there are different objectives, and therefore different data behind each method.
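To make the quantitative side of comparative testing a little more concrete, here is a minimal sketch of how you might tally task completion, time on task, and error rates for two prototype variants. The `TaskAttempt` record, the `summarize` helper, the variant labels, and the sample numbers are all made up for illustration; your own spreadsheet or testing tool may organize the same data quite differently.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskAttempt:
    """One participant's attempt at one task on one prototype variant."""
    variant: str      # hypothetical label for the interface, e.g. "A" or "B"
    completed: bool   # did the participant finish the task?
    seconds: float    # time spent on the task
    errors: int       # number of mistakes observed


def summarize(attempts):
    """Return completion rate, mean time on task, and mean errors per variant."""
    summary = {}
    for variant in sorted({a.variant for a in attempts}):
        rows = [a for a in attempts if a.variant == variant]
        summary[variant] = {
            "completion_rate": sum(a.completed for a in rows) / len(rows),
            "mean_seconds": mean([a.seconds for a in rows]),
            "mean_errors": mean([a.errors for a in rows]),
        }
    return summary


# Made-up observations, for illustration only.
attempts = [
    TaskAttempt("A", True, 40.0, 1),
    TaskAttempt("A", False, 90.0, 3),
    TaskAttempt("B", True, 30.0, 0),
    TaskAttempt("B", True, 50.0, 1),
]

results = summarize(attempts)
for variant, stats in results.items():
    print(variant, stats)
```

With only a handful of participants, numbers like these are a prompt for follow-up questions rather than proof that one design is better, which is where the qualitative “why” comes back in.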

How do I do user testing?

Though you’re now aware that there are lots of different ways to go about getting user feedback, there are some fundamental steps to planning and executing your user testing strategy that apply regardless of design stage or testing method.

1. Figure out what you want to test. Think about the specific questions you need answered at this stage to move your design process forward.

2. Develop your prototype to answer these questions. Especially in the concept validation and design iteration stages, it’s best to keep your prototypes lean and focused on specific tasks or features. Build only what is essential for what you need to learn.

3. Figure out who your test users will be. Assuming you have multiple user personas, you should plan to test with a set of users who represent the different needs, goals, and perspectives of each of them. Also consider testing with some folks outside of your target to make sure you’re capturing a more diverse, real-world array of perspectives.

Here are some helpful best practices for creating a recruiting screener questionnaire to find test participants.

4. Organize your testing logistics. Decide if you’d like the test to be moderated or unmoderated. The presence of a moderator is necessary when you want to ask open-ended or follow-up questions. Unmoderated tests are useful for task analysis when you’re observing and measuring behavior patterns. Most importantly, you’ll need to decide whether to do in-person or remote testing. (You can do both!) Here are a few pros and cons of each option:

- In-person user testing pros: The ability to read body language and observe non-verbal cues and the influence of environmental factors; easier to build rapport

- In-person user testing cons: Can be more challenging to coordinate schedules; more expensive; potential environmental distractions; limited by geographical proximity

- Remote testing pros: Much easier to achieve scale; easier to find a higher volume of qualified participants; convenient, efficient, more cost-effective

- Remote testing cons: Misses the richness of in-person interactions; technical difficulties; harder to intervene if things are going off-course

5. Work on developing your test script while you’re recruiting and screening participants. First, make a list of tasks you want a user to complete in the testing session. Then list the questions you want to ask. Map out a rough script of the end-to-end test, including your introduction, the background questions you’ll ask, an outline of the tasks/scenarios, and how you’ll wrap up. With a colleague, “rehearse” a few times so you get a sense of how long each part of the test should take. Keep the script handy during your test session to stay focused and avoid forgetting anything.

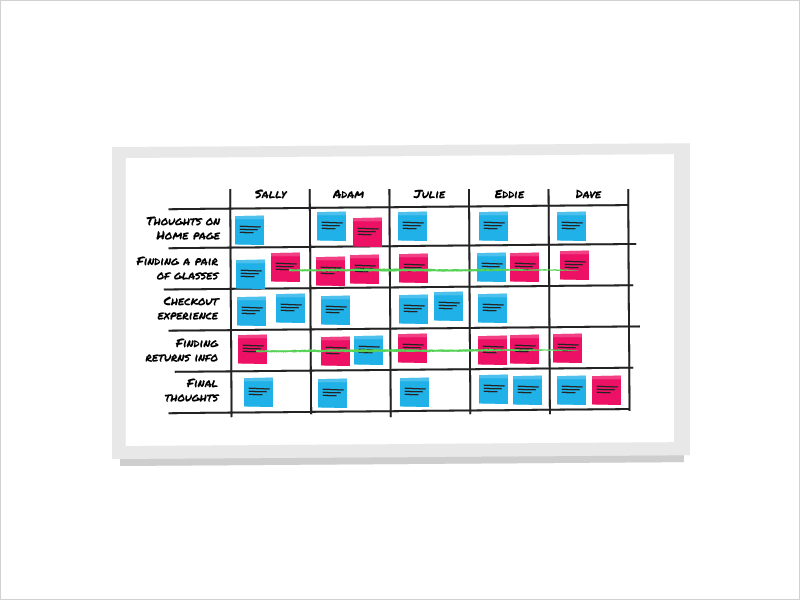

6. Create a framework for capturing and processing user feedback. Make sure you invite a dedicated notetaker and consider recording the session so you can go back to review it later. A simple way to capture feedback is to prepare a spreadsheet or whiteboard that lists the test tasks down the side and the test participants across the top, and then add notes in each cell. You can even color-code positive, negative, and neutral feedback so you can easily spot patterns.

A simple guide for whiteboard note-taking (Source: Si digital)
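If you would rather keep your notes digitally than on a whiteboard, the same tasks-by-participants grid can be sketched in a few lines of code. This is only one possible layout, not a prescribed one: the task names, participant labels, notes, and the `new_grid`, `add_note`, and `sentiment_counts` helpers are all hypothetical, and the three sentiment tags stand in for the color-coding.

```python
from collections import Counter

# Stand-ins for color-coding: each note gets exactly one of these tags.
SENTIMENTS = {"positive", "negative", "neutral"}


def new_grid(tasks, participants):
    """Build an empty grid: one cell (a list of notes) per task and participant."""
    return {task: {p: [] for p in participants} for task in tasks}


def add_note(grid, task, participant, sentiment, note):
    """Record one tagged note in the cell for this task and participant."""
    if sentiment not in SENTIMENTS:
        raise ValueError(f"unknown sentiment: {sentiment}")
    grid[task][participant].append((sentiment, note))


def sentiment_counts(grid, task):
    """Count notes per sentiment across all participants for one task."""
    return Counter(
        sentiment
        for notes in grid[task].values()
        for sentiment, _ in notes
    )


# Made-up tasks, participants, and notes, for illustration only.
grid = new_grid(["Find a movie", "Pick seats", "Pay"], ["P1", "P2", "P3"])
add_note(grid, "Pay", "P1", "negative", "Could not find the promo code field")
add_note(grid, "Pay", "P2", "negative", "Unsure whether the payment went through")
add_note(grid, "Pay", "P3", "positive", "Liked the saved card option")
add_note(grid, "Pick seats", "P1", "neutral", "Scrolled past the seat map at first")

pay_counts = sentiment_counts(grid, "Pay")
print(pay_counts)
```

Counting tags per task is a quick way to see where negative feedback clusters, but the notes themselves are still what tell you why.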

Best practices for getting useful user feedback

Our goal with this guide was to create an accessible overview that removed any confusion or intimidation around how to gather user feedback. Hopefully, by this point, you’re inspired to start integrating user testing in your product design process right away. Before you go, there are a few things to keep in mind to ensure you’re getting the most valuable user feedback.

First, remember to stay neutral. That’s one good reason to use a moderator! Avoid leading questions and any other cues in your tone or body language that might nudge a user to answer or behave in a certain way. Users are prone to wanting to please and to avoid insulting or offending. Lower the stakes by telling users that you’re not the designer, that they won’t hurt your feelings, and that they should feel comfortable sharing their honest opinions. Always follow up with questions that help you get to the core “why.”

This should go without saying, but be respectful of the user’s time. Make sure everything works in advance. Test your tech and connections. Confirm dates, times, and locations with your participants. Rehearse your test script. Double- and triple-check your prototype to make sure it’s working as it needs to, clean up typos, and fix any bugs. Even if things don’t totally go according to plan (and they won’t!), be as prepared as you can so you’re able to stay focused and respond in the moment.

Finally, resist the urge to apologize, explain, or become defensive if the user’s feedback isn’t favorable. You are there to listen and observe. There will be time to process afterward, and it will be more useful to look at the feedback holistically after you’ve finished testing with all users. That's when you’ll be able to spot patterns and make sense of what you’ve learned.

Remember to keep an open mind. It can be hard to hear criticism of your ideas and hard work. Think of prototypes as experiments, which, to paraphrase Thomas Edison, are just a means of finding all the ways that don’t work so you can get to the one that does. Seeking out user feedback is one of the most powerful ways to shape a product’s development, and inviting users into the process will ensure they’re as invested in your success as you are.

Uizard is an easy-to-use tool to help you design prototypes, test your ideas, and iterate your way forward. Sign up to Uizard for free, and turn your ideas into interactive prototypes in minutes.